The "Dead Internet Theory" Is Becoming Reality, and the Numbers Prove It!

The Dead Internet Theory is no longer fringe: bots now generate more web traffic than humans do. Here's what the data says and what it means for the web's future.


Sachin K Chaurasiya

3/4/2026 · 13 min read

When a Fringe Theory Becomes a Documented Fact

There was a time when the Dead Internet Theory lived in the dark corners of forums like 4chan and Agora Road's Macintosh Café, dismissed as paranoid, science-fiction-adjacent thinking for people who spent too much time online. The premise seemed too bleak to be true: that the internet you were scrolling through wasn't really a human space anymore but a synthetic landscape of bots, automated content, and algorithmically curated illusions designed to keep you engaged without ever actually connecting you with another real person.

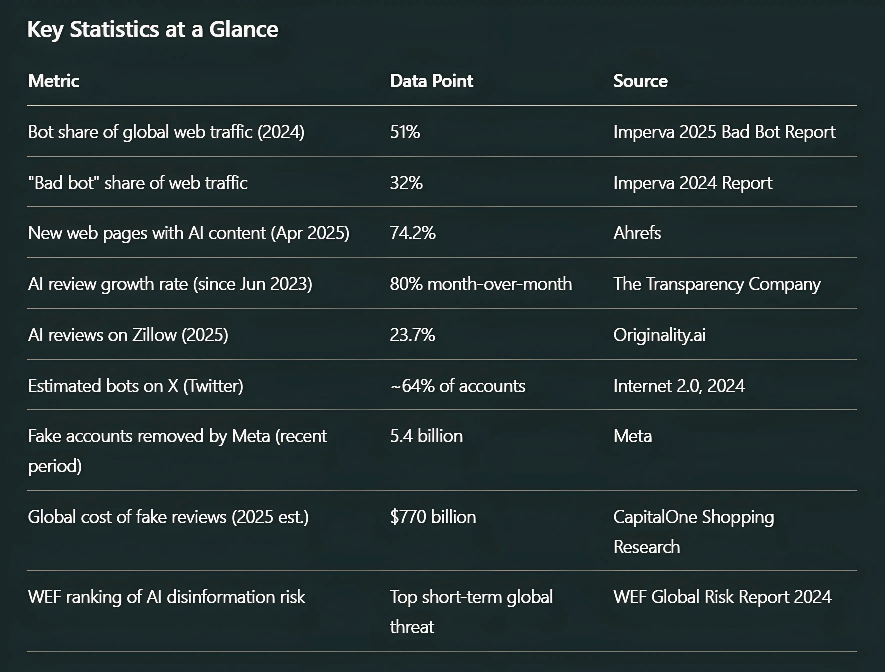

That was the conspiracy. Here is the 2025 reality: bots now account for 51% of all global internet traffic. AI-generated articles surpassed human-written content for the first time in late 2024. Nearly 74% of newly published web pages analyzed in April 2025 contained AI-generated content. And even Sam Altman, CEO of OpenAI, the company whose tools helped enable this transformation, has publicly acknowledged the problem he helped create.

The Dead Internet Theory is no longer a theory. It's a report with citations.

What Is the Dead Internet Theory? (And Why It Matters Now)

The Dead Internet Theory is the claim that, since approximately 2016, genuine human activity on the internet has been systematically displaced by automated bots, algorithmically curated feeds, and artificially generated content, creating a digital environment that performs liveliness while hollowing out authentic human connection.

The theory has two core components:

The Observable Layer: Organic human interaction has been overwhelmed by bots, AI-generated content, and engagement-farming automation. What you see online (the likes, the comments, the viral posts, even the search results) is increasingly produced by machines mimicking humans, not by humans themselves.

The Conspiracy Layer: This displacement is coordinated by government actors, tech platforms, and intelligence agencies to manipulate public opinion and reduce genuine discourse. This is the part that remains speculative and conspiratorial.

The reason this theory is surging back into mainstream conversation in 2025 and 2026 isn't the conspiracy layer. It's because the observable layer now has hard data behind it, and that data is difficult to argue away.

The Data That Changed Everything

Bots Have Officially Outnumbered Humans Online

The most consequential data point in recent internet history is this: according to Imperva's 2025 Bad Bot Report, automated systems accounted for 51% of all web traffic in 2024. For the first time in a decade, non-human activity surpassed human activity across the internet as a whole. Human browsing and genuine interaction now represent the minority of online activity, at just 49%.

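This wasn't a sudden spike. The trajectory has been building for years, although the figures below come from different vendors and methodologies, so year-to-year comparisons are approximate: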

2016: Imperva's report found bots responsible for about 52% of web traffic.

2018: 37.9% of all internet traffic was bot-generated (Distil Networks).

2022: Roughly half of all internet traffic was already attributed to bots.

2023: Imperva's analysis found automated traffic at 49.6%.

2024: Bots crossed the 51% threshold officially, more than human traffic, according to the 2025 Bad Bot Report.

What changed between 2018 and 2024? Generative AI. The arrival of large language model (LLM) tools like ChatGPT gave anyone, anywhere, the ability to produce vast amounts of human-sounding content at near-zero cost. The floodgates didn't just open; they were removed entirely.

Bad Bots: The Malicious Undercurrent

Not all bot traffic is benign. Imperva's 2024 report found that "bad bots," automated programs designed for click fraud, data scraping, spam campaigns, and disinformation, accounted for 32% of all internet traffic in 2023, and its 2025 report put that share at 37% for 2024, a five-point jump in a single year. More than a third of everything happening on the internet is not just automated but actively hostile.

A February 2025 paper in the Asian Journal of Research in Computer Science described major social platforms as "machine-driven ecosystems," stating that bots generate between 40% and 60% of web traffic and are routinely deployed to inflate metrics like likes, shares, and comments, creating what the researchers called "the illusion of vibrant online engagement."

The illusion of vibrant engagement. That phrase might be the most precise description of the Dead Internet's core phenomenon.

AI Content Has Surpassed Human Content

The bot traffic problem is inseparable from the content generation crisis. Consider:

Analytics firm Graphite found that AI-generated articles surpassed human-written ones for the first time in late 2024.

Ahrefs analyzed 900,000 newly published web pages in April 2025 and found 74.2% contained AI-generated content. Only one in four new pages was purely human-written.

According to The Transparency Company, AI-generated reviews have been growing at 80% month-over-month since June 2023.

A study by Originality.ai found that in 2025, 23.7% of real estate reviews on Zillow were likely AI-generated, up from just 3.63% in 2019.

Capital One Shopping Research estimates that fake reviews will cost the average e-commerce customer $125 this year, with global fraud from fake reviews totaling approximately $770 billion.

In 2024, Google publicly acknowledged that its search results were being inundated with websites that "feel like they were created for search engines instead of people." The company that once promised to organize the world's information was admitting, in corporate-speak, that a significant portion of that information was machine-manufactured garbage.

How We Got Here: The Architecture of a Dead Internet

Understanding how the internet reached this state requires understanding the incentive structures that made it inevitable.

The Engagement Economy Created the Perfect Environment for Bots

Social media platforms were built on an engagement economy, one where every like, comment, share, and view had monetary value. Advertisers paid for eyeballs; platforms optimized for attention. This created an obvious financial incentive: anything that looks like engagement has value, whether or not it comes from a real human.

As sociologist Alex Turvy told Decrypt in late 2025, "Humanness has become just another signal to fake in order to make money. What's missing now is the mess that used to prove someone was real."

Bots designed to generate fake engagement were financially incentivized from the beginning. And as AI improved, the cost of deploying convincing fake accounts dropped to near zero.

The LLM Acceleration

The public release of ChatGPT in November 2022 was an inflection point. Prior to this, AI-generated content was the domain of tech companies and well-resourced bad actors. After ChatGPT, anyone with an internet connection could produce essays, social media posts, product reviews, blog articles, and even code at an industrial scale.

The results were immediate and dramatic. Content mills began churning out thousands of SEO articles per day using LLMs. Social media accounts began automating their posting schedules entirely. Review platforms were flooded. YouTube was saturated with AI-voiced content over stock footage. And crucially, the bots creating this content were getting better at passing as human.

Timothy Shoup of the Copenhagen Institute for Futures Studies warned in 2022 that if large language models proliferated unchecked, 99% to 99.9% of all internet content could be AI-generated by 2025 to 2030. At current growth rates, that prediction looks less like alarmism and more like a trajectory.

The "Shrimp Jesus" Problem: Engagement Farms at Scale

Perhaps no example better illustrates the Dead Internet in action than the viral "Shrimp Jesus" phenomenon of 2024. Facebook was flooded with surreal AI-generated images depicting Christ-like figures fused with shrimp, crustaceans, and kittens, posts that went viral with thousands of likes and comments. The comment sections were filled with hundreds of near-identical responses like "Amen" from what were clearly bot accounts.

Meaningless content, produced by AI, amplified by botnets, and rewarded by engagement algorithms. The machine had learned to feed itself.

This wasn't an isolated curiosity. On X (formerly Twitter), a 2024 study by Internet 2.0 using AI analysis tools estimated that approximately 64% of all accounts on the platform were likely bots. An earlier estimate from Yofi.ai suggested 24% to 37% of X's daily active users were bots, numbers the platform itself has always disputed.

Facebook, for its part, deactivated 5.4 billion fake accounts in a recent period, up from 3.3 billion removed in 2018. The platform acknowledges approximately 5% of its regular user base still consists of fake accounts. Given a user base approaching 2.5 billion, that's roughly 125 million fabricated presences in the social graph we all navigate.

The Disinformation Dimension: Bots as Political Infrastructure

The Dead Internet Theory's "conspiracy layer" becomes significantly less conspiratorial when you examine documented disinformation campaigns.

In 2016, researchers analyzing 14 million tweets found that bots were significantly involved in amplifying articles from unreliable sources during the U.S. presidential election and Brexit referendum, with high-follower bot accounts legitimizing misinformation that real users then reshared in good faith.

More recently, a coordinated pro-Russian disinformation campaign on X deployed more than 10,000 bot accounts to post tens of thousands of messages falsely attributed to U.S. and European celebrities, spreading pro-Kremlin narratives about the war in Ukraine. In Poland, the National Security Bureau documented a "bot army" that generated 33.9 million comments and nearly 40,000 pieces of content on polarizing topics in just the first months of 2024.

The World Economic Forum's Global Risks Report 2024 identified AI-driven disinformation as a top short-term threat to global stability, placing it above economic risks for the near term.

What makes this different from earlier propaganda is that it's now scalable at negligible cost. One person, one laptop, one API key, and access to an LLM is all it takes to run an influence campaign that would have required an entire institutional apparatus a decade ago.

The Model Collapse Problem: A Self-Eating Machine

There is a consequence of AI-generated content that researchers are only beginning to fully reckon with, and it may be the most catastrophic long-term development of all: model collapse.

Here's how it works. The current generation of AI models was trained primarily on human-written content from the pre-2023 internet, the last large reservoir of "clean" human data. As AI-generated content floods the web, the next generation of models will inevitably train on that synthetic content. Each generation introduces small statistical distortions. Outputs become increasingly homogeneous, less diverse, and more prone to hallucination. The AI starts to summarize AI summarizing AI, with each layer compressing and distorting the original signal.
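The feedback loop described above can be illustrated with a common toy model, offered here as a rough sketch rather than a description of how any real AI system is trained. It assumes each "model" is nothing more than a bell curve (a Gaussian) fitted to its training data, and that every new generation trains only on samples produced by the previous one, with no fresh human data mixed in. The function name `simulate_collapse` and its parameters are illustrative assumptions, not taken from any library or study.

```python
import random
import statistics


def simulate_collapse(n_samples: int = 10, generations: int = 300, seed: int = 42) -> list[float]:
    """Toy model of recursive training (an illustrative assumption, not a real pipeline).

    Each 'model' is just a Gaussian fitted to the current data. Every new
    generation is trained only on synthetic samples from the previous model.
    Returns the fitted spread (standard deviation) at each generation.
    """
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    # Generation 0: stand-in for "human" data, drawn from a standard normal.
    data = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
    spreads = []
    for _ in range(generations + 1):
        # "Train" the model: estimate the mean and spread of the current data.
        mu = statistics.fmean(data)
        sigma = statistics.pstdev(data)
        spreads.append(sigma)
        # The next generation sees only this model's synthetic output.
        data = [rng.gauss(mu, sigma) for _ in range(n_samples)]
    return spreads


if __name__ == "__main__":
    history = simulate_collapse()
    for gen in range(0, len(history), 50):
        print(f"generation {gen:3d}: spread = {history[gen]:.3g}")
```

Running it prints the spread every 50 generations; with these settings the spread shrinks toward zero, the toy equivalent of outputs becoming "increasingly homogeneous, less diverse." The sketch uses `statistics.pstdev`, a maximum-likelihood-style fit; swapping in `statistics.stdev` slows the shrinkage, but in this toy setup the spread still tends to drift downward over many generations.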

YouTube engineers reportedly worried about a version of this problem they called "the Inversion," the possibility that their bot-detection algorithms would start treating fake views as the default and real human views as anomalous.

Sociologist Das put it bluntly: "Chatbots and AI tools summarize that material and hand it back to you. You end up reading machines summarizing other machines."

The 2024–2025 period has already been called the "Slop Era," a term for the flood of low-quality, formulaic AI content that increasingly contaminates the training data available to every model being built today. The pre-2023 clean internet is, in a very real sense, gone.

The Human Migration to Walled Gardens

If the open web is becoming an automated wasteland, where are real humans going?

The evidence suggests they're retreating behind paywalls and into invitation-only spaces. Substack reached a $1.1 billion valuation with over 5 million paid subscriptions, people voluntarily paying to read content they can be reasonably sure a human wrote, and around 100 million monthly visits. Private Discord servers, paid newsletters, closed Slack communities, and friends-only social feeds are all growing.

"There are very few people writing blogs anymore," researcher Das told Decrypt. "You can't get discovered, and if you do, people assume it's AI. Most of the conversation now happens inside platforms built for performance, not honesty."

The authentic human web is migrating inward: smaller, more intimate, more verified, and increasingly paywalled. The public-facing internet, meanwhile, is becoming a more automated, synthetic, and impersonal space.

This is itself a kind of irony: the open internet, which began as a democratic public commons, is now so overrun by automation that genuine human connection requires subscription fees and gated communities.

What the Platforms Are (and Aren't) Doing

It would be inaccurate and unfair to suggest platforms are simply watching this happen. The fight against bot infestation and AI-generated spam is real, expensive, and ongoing.

Google has rolled out a series of aggressive Core Updates, including significant updates in March and August 2024 explicitly designed to demote unoriginal, unhelpful, and AI-generated content created primarily to game search rankings. The company's Panda update in 2011 targeted content farms; today's updates target AI content farms operating at an incomparably higher scale.

Meta continues to remove billions of fake accounts annually.

X has explored requiring payment for membership specifically to disrupt bot economics.

TikTok, paradoxically, began offering virtual AI influencers to advertising agencies in 2024, essentially institutionalizing a practice that critics say accelerates the dead internet dynamic.

Blockchain-based "proof of personhood" systems are also emerging. Projects like World (formerly Worldcoin) and Human Passport (formerly Gitcoin Passport) are attempting to create verified human identities that can be tied to online activity, adding a layer of cryptographic proof that you are, in fact, a real human being.

The irony of needing blockchain to prove you're human on a network built by humans is not lost on anyone.

Is the Internet Actually "Dead"? The Nuanced Reality

To say the internet is "dead" is an overstatement. Human activity hasn't vanished; it's been diluted and drowned out. The distinction matters.

Researcher Alex Turvy's framing is instructive: the concern isn't that fewer people are online. It's that automated activity is eroding the basic cues people use to tell who's real. When machines can convincingly mimic human signals (the slightly awkward phrasing, the typos, the personal anecdotes), users begin to doubt everyone. Trust erodes not because humans are absent, but because authenticity becomes impossible to verify.

And Meta itself reported that AI-generated misinformation had a "modest and limited" impact on elections in 2024, suggesting that while the scale of synthetic content is alarming, its direct persuasive power may be overstated. The deeper damage may be more subtle: not that AI convinces people of specific falsehoods, but that it corrodes the generalized trust required for any information to be believed.

The internet isn't dead. It's suffering from something more insidious: a slow erosion of trust, signal, and authenticity that makes it increasingly difficult to navigate as a genuinely informative or connective space.

What This Means for You: Practical Implications

For content creators: The pressure to distinguish human-made work has never been greater. Authenticity, personal narrative, lived experience, and genuine expertise are now differentiators, not just aesthetic choices. Your humanity is your competitive advantage.

For consumers: Practice healthy skepticism. Before trusting a review, a news article, a social media profile, or a comment, consider the source, the platform, and the incentive structure behind it. AI-generated content isn't inherently bad, but content designed to deceive is.

For businesses: Fake reviews and inflated engagement figures are a growing liability. The economics of synthetic credibility are increasingly fragile, and platforms are tightening detection. Build real audiences.

For society: The Dead Internet Theory's most important warning isn't about bots; it's about the infrastructure of trust. Democratic discourse, market decisions, and personal relationships increasingly depend on the reliability of information we encounter online. If that infrastructure is fundamentally corrupted, the downstream effects are severe and hard to reverse.

The Dead Internet Theory began as a conspiracy. It has matured into a documented crisis.

When more than half of all internet traffic is non-human, when nearly three-quarters of new web content contains AI-generated text, and when the CEO of the world's most prominent AI company publicly acknowledges the problem he helped accelerate, the gap between "theory" and "observable reality" has effectively closed.

What remains is a set of genuinely hard questions that no algorithm, platform update, or policy paper has yet answered: How do we rebuild trust in digital information? How do we preserve the signal of authentic human experience in a network increasingly designed to simulate it? And what does it mean for democracy, culture, and human connection when the public commons of the internet becomes a machine-tended performance rather than a real gathering place?

The internet isn't dead. But something irreplaceable about what it used to be (the rough, human, discoverable quality of stumbling across a real person's real thoughts) is being buried under an avalanche of synthetic approximations.

Keeping it alive requires us to notice. To ask who wrote what we're reading. To pay for the writers and communities worth paying for. To show up as humans and insist that it matters.

FAQs

Q: What is the Dead Internet Theory in simple terms?

The Dead Internet Theory is the idea that, since around 2016, most of what you see on the internet (posts, comments, articles, and profiles) is generated by automated bots and AI rather than by real humans. The "feel" of a busy, active internet is increasingly artificial.

Q: Is the Dead Internet Theory actually true?

The conspiracy elements of the theory (government coordination to control the population) remain unproven. However, the observable core, that bots now generate more than half of internet traffic and that AI-generated content has surpassed human-written content, is now supported by multiple independent data sources, including Imperva's 2025 Bad Bot Report and analytics from firms like Graphite and Ahrefs.

Q: What percentage of internet traffic comes from bots?

According to Imperva's 2025 Bad Bot Report, automated systems accounted for 51% of all global web traffic in 2024. The same report put "bad bot" traffic (automated programs engaged in malicious activity like spam, scraping, and manipulation) at 37% of all traffic, up from 32% in 2023.

Q: What is "AI slop," and how does it relate to the Dead Internet?

"AI slop" is informal shorthand for the flood of low-quality, formulaic, AI-generated content produced primarily to generate traffic and ad revenue rather than genuinely inform or entertain readers. It's a key driver of the Dead Internet phenomenon because it degrades search quality, drowns out human voices, and corrupts the training data used to build the next generation of AI models.

Q: What percentage of social media accounts are bots?

Estimates vary significantly by platform and methodology. A 2024 study estimated that roughly 64% of X (Twitter) accounts are likely bots. Meta acknowledges that approximately 5% of its active user base consists of fake accounts (roughly 125 million fake profiles). Researchers broadly estimate that bots represent 24% to 60% of activity on major platforms, depending on the metric measured.

Q: Is AI-generated content taking over Google search results?

Google itself acknowledged in 2024 that search results were being "inundated with websites that feel like they were created for search engines instead of people." Ahrefs' April 2025 analysis found 74.2% of newly published web pages contained AI-generated content. Google has responded with a series of Core Updates targeting AI-generated spam, though the arms race between detection and generation continues.

Q: What can I do to avoid AI-generated misinformation?

Prioritize primary sources over aggregators. Cross-reference claims across multiple independent outlets. Look for specific, verifiable details that AI tends to smooth over or fabricate. Favor platforms and communities where identity verification is stronger. Pay attention to whether the voice sounds genuine or generically competent; AI writing often lacks specific personal experience.

Q: What is "model collapse," and why does it matter?

Model collapse is the risk that AI systems trained on AI-generated content will progressively degrade in quality, producing outputs that are more homogeneous, less accurate, and more prone to hallucination. It's a compounding problem: as the web fills with synthetic content, future AI training data becomes increasingly "contaminated," and each new generation of models is less reliably grounded in genuine human knowledge.

Q: Where is authentic human activity on the internet moving?

Evidence suggests genuine human community is migrating to paid newsletters (platforms like Substack), private Discord and Slack communities, closed social groups, and verified-identity platforms. Public-facing social media is increasingly performance-driven and bot-saturated; intimate, invite-only, or paid spaces are where authentic conversation is retreating.

Q: Did Sam Altman acknowledge the Dead Internet Theory?

Yes. In September 2025, OpenAI CEO Sam Altman posted on X: "I never took the dead internet theory that seriously, but it seems like there are really a lot of LLM-run Twitter accounts now." The post went viral and reignited mainstream discussion about the theory's real-world validity, with particular irony given that Altman's own company builds the tools at the center of the phenomenon.


All © Copyright reserved by Accessible-Learning Hub

